
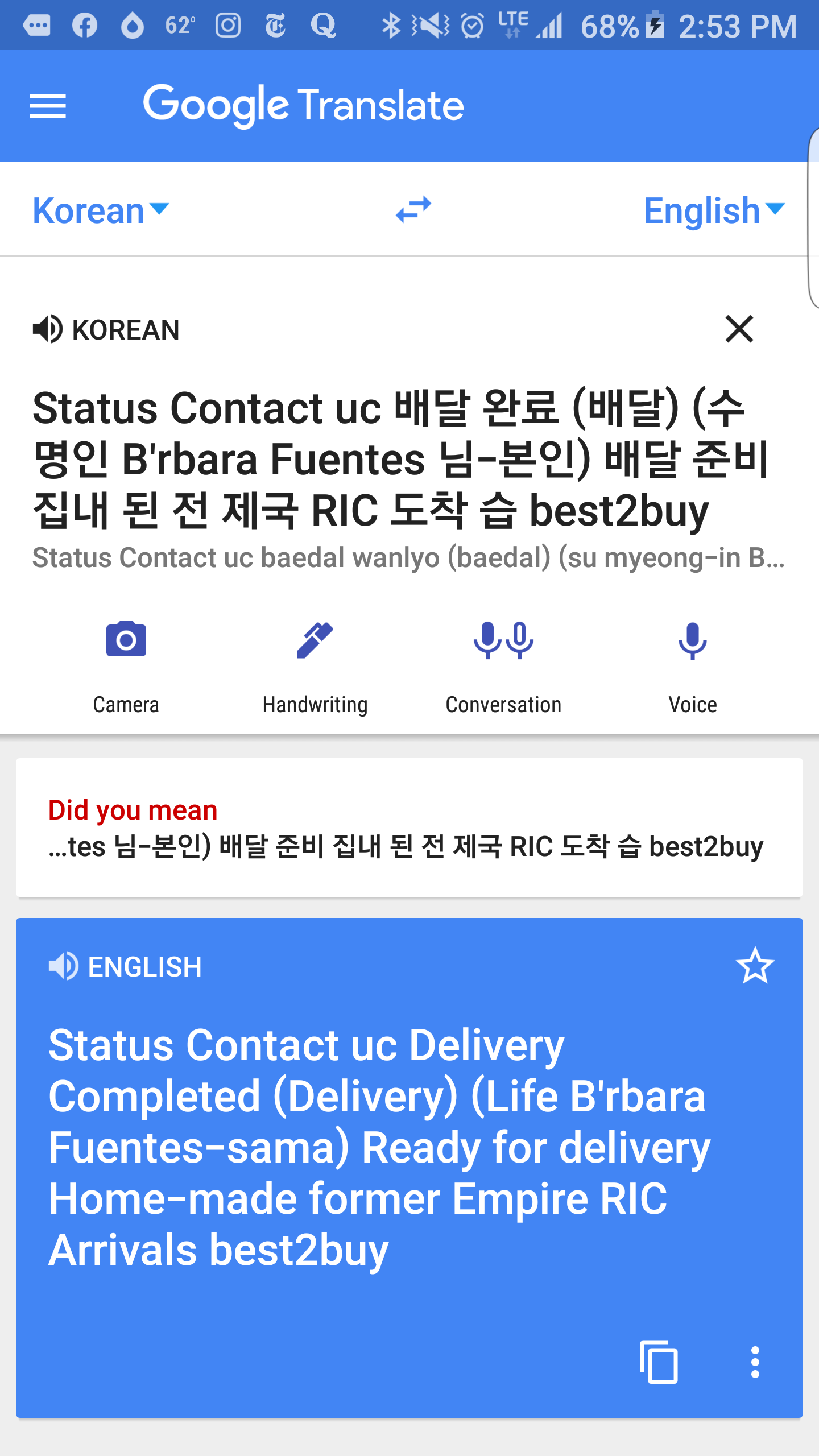

In addition to improving translation quality, our method also enables “Zero-Shot Translation” - translation between language pairs never seen explicitly by the system. Our proposed architecture requires no change in the base GNMT system, but instead uses an additional “token” at the beginning of the input sentence to specify the required target language to translate to.

In “ Google’s Multilingual Neural Machine Translation System: Enabling Zero-Shot Translation”, we address this challenge by extending our previous GNMT system, allowing for a single system to translate between multiple languages. However, while switching to GNMT improved the quality for the languages we tested it on, scaling up to all the 103 supported languages presented a significant challenge. In September, we announced that Google Translate is switching to a new system called Google Neural Machine Translation (GNMT), an end-to-end learning framework that learns from millions of examples, and provided significant improvements in translation quality.

With neural networks reforming many fields, we were convinced we could raise the translation quality further, but doing so would mean rethinking the technology behind Google Translate.

To make this possible, we needed to build and maintain many different systems in order to translate between any two languages, incurring significant computational cost. In the last 10 years, Google Translate has grown from supporting just a few languages to 103, translating over 140 billion words every day.

Posted by Mike Schuster (Google Brain Team), Melvin Johnson (Google Translate) and Nikhil Thorat (Google Brain Team)
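The target-language token idea can be illustrated with a minimal Python sketch. It assumes a `<2xx>` token format (for example `<2es>` for Spanish) and uses hypothetical helper names (`add_target_token`, `build_training_examples`, `zero_shot_directions`). It only shows how inputs to one shared model might be tagged and which translation directions would count as zero-shot; it is not how GNMT itself is implemented.

```python
from itertools import permutations


def add_target_token(source: str, target_lang: str) -> str:
    """Prepend an artificial target-language token to the source sentence.

    The "<2xx>" token format is an illustrative assumption.
    """
    return f"<2{target_lang}> {source}"


def build_training_examples(pairs):
    """Convert (src_lang, tgt_lang, src_text, tgt_text) tuples into
    (model_input, model_output) pairs for a single shared model.

    Only the input is changed; the model architecture stays the same.
    """
    return [
        (add_target_token(src_text, tgt_lang), tgt_text)
        for _src_lang, tgt_lang, src_text, tgt_text in pairs
    ]


def zero_shot_directions(languages, trained_directions):
    """Return the (src, tgt) directions that never appear in training,
    i.e. the pairs the model would have to translate zero-shot."""
    all_directions = set(permutations(languages, 2))
    return sorted(all_directions - set(trained_directions))


if __name__ == "__main__":
    # Toy data, made up for illustration only.
    examples = build_training_examples(
        [("en", "es", "Hello, how are you?", "¿Hola, cómo estás?")]
    )
    print(examples[0][0])  # <2es> Hello, how are you?

    # Hypothetical setup: training covers Japanese<->English and Korean<->English.
    trained = [("ja", "en"), ("en", "ja"), ("ko", "en"), ("en", "ko")]
    print(zero_shot_directions(["en", "ja", "ko"], trained))
    # [('ja', 'ko'), ('ko', 'ja')]
```

Because the target language travels with the input, the same model can in principle be asked for a Korean-to-Japanese translation simply by prefixing `<2ja>` to a Korean sentence, even though no Korean/Japanese pair appeared in its training data.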
